Pruna AI Open Sources Model Optimization Framework

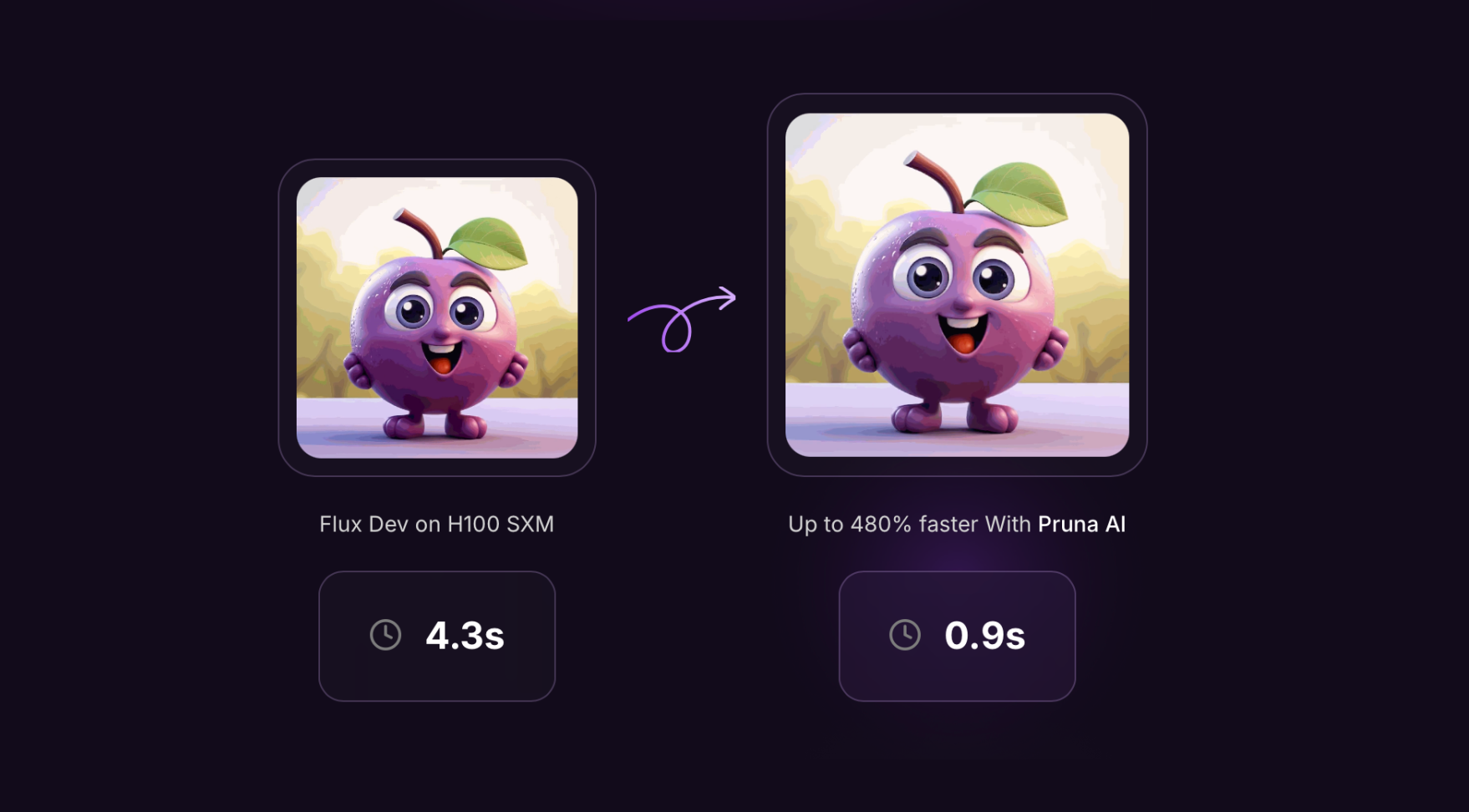

Pruna AI has open-sourced its AI model optimization framework, which applies efficiency methods such as caching, pruning, quantization, and distillation to AI models. The company announced the release on its social media channels. The framework standardizes how compressed models are saved, loaded, and evaluated, with the goal of improving performance while keeping quality loss minimal.
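To make the idea of compressing a model and then evaluating the quality loss concrete, here is a minimal sketch of one of the methods mentioned, quantization, in plain Python. It does not use Pruna AI's framework or API; the function names (`quantize_int8`, `dequantize`, `mean_abs_error`) and the sample weights are hypothetical and chosen only for illustration.

```python
def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero input, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


def mean_abs_error(original, restored):
    """A simple stand-in for a quality metric: average absolute difference."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# Hypothetical weights standing in for one layer of a model.
weights = [0.12, -0.53, 0.98, -1.27, 0.004]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

print("quantized:", quantized)
print("scale:", scale)
print("mean absolute error:", mean_abs_error(weights, restored))
```

Each weight is stored as a small integer plus one shared scale, which is where the memory saving comes from; the error printed at the end is the kind of quality loss an evaluation step would track, although a real framework would measure it on model outputs rather than on raw weights.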

The framework supports a wide range of models, including large language models, diffusion models, and computer vision models, with a current focus on image and video generation models. Pruna AI's enterprise offering includes advanced features like an optimization agent that automates the compression process based on user-defined parameters.

Pruna AI's open-source initiative aims to make AI more accessible and sustainable by providing a comprehensive suite of compression algorithms. The company also offers a pro version with additional optimization features, billed by the hour in much the same way as cloud GPU rentals.
